How Big Tech’s Content Moderation Policies Could Jeopardize Users in Authoritarian Regimes

Advocates of social media have historically lauded its ability to facilitate democratic progress by connecting people over space and time, enabling faster and wider mobilization than ever before. However, in recent years, this optimism has faded, and platforms have also become effective tools for dictators looking to spread disinformation and propaganda. Today, the social media ecosystem is a hotly contested sphere of political influence rife with disinformation, hate speech, and even violence.

Despite being owned and operated by private companies based largely in the United States, these platforms play an important role in public life worldwide. The battles for influence between democratic and authoritarian actors on social media can make or break attempts at democratic transition. As such, the decisions made by these big tech companies can have significant consequences in countries around the world, especially in authoritarian countries with burgeoning democratic movements.

Defending Freedom of Expression: The Need for Transparency and Consistency

Global audiences are becoming increasingly aware of the threat of false information on social media. A 2020 survey by the Reuters Institute for the Study of Journalism showed that 40 percent of respondents are more concerned about encountering false information on social media than other online sources of information. While this may justify efforts by social media companies to aggressively moderate online content, the reality of the issue is more complex.

These social media companies have proven inconsistent in how they apply content moderation systems, and their decisions about what content is displayed as well as their overall lack of transparency should be of grave concern to users everywhere. Erratic moderation policies have sometimes resulted in the censorship of legitimate investigative news content and important health information by social media algorithms.

Katherine Chen, a Facebook Oversight Board member, is critical of the content moderation policy that guides the company. In an interview with Reuters, she states that the Board “can see that there are some policy problems at Facebook.” She argues for the establishment of policies, particularly those involved in human rights and freedom of speech, that are “precise, accessible, clearly defined.”

Uganda: How Facebook “Caused” an Internet Blackout

Recent events in Uganda are an example of the dangers of these unilateral decision-making processes. Days ahead of the elections in Uganda in January, President Museveni announced a ban on Facebook and other social media platforms. Addressing the nation in Kampala, Museveni accused Facebook of political bias against the ruling party, the National Resistance Movement (NRM), and “arrogance.”

The move was prompted by Facebook’s decision to take down a network of pro-Museveni accounts in the run-up to the presidential election, claiming they were fake accounts linked to the ministry of information. “I told my people to warn [Facebook] . . . If it is to operate in Uganda, it should be used equitably,” Museveni said. “If you want to take sides against the NRM, then that group would not operate in Uganda. Uganda is ours.” Facebook defended its decision to ban the accounts, insisting that an investigation had revealed their involvement in a coordinated effort to undermine political debate in Uganda.

Regardless of the merits of the investigation, many open internet advocates argue that private corporations should not have unilateral power over the ‘public realm’ and must consider local circumstances and political nuances in their moderation decisions.

As Odanga Madung, an internet policy researcher based in Kenya, told the BBC: “Any casual observer of Ugandan politics expected the government to impose internet restrictions ahead of the elections, so Facebook’s decision—especially the absence of tact when punishing infringements of its terms of service—offered Museveni a timely ruse to clothe the inevitable shutdown as a retaliation.”

While controversial content moderation decisions from Facebook and Twitter might garner criticism in thriving democracies, they can be met with much harsher consequences in more authoritarian countries where decision-making is concentrated in few hands. In these contexts, companies are often left with no choice but to do the government’s bidding, lest they end up restricted or outright blocked. In either case, users are left to deal with potentially significant repercussions, such as restricted access to the internet, which can impact their businesses and livelihoods.

In the Ugandan case, citizens bore the brunt of Facebook’s decision. Internet freedom monitor NetBlocks found that the five-day internet shutdown in Uganda cost the economy around $9 million (approximately 33 billion Ugandan Shillings), disproportionately affecting poor Ugandans who often rely on internet-based mobile applications to run their businesses.

The Global Implications of Content Moderation Decisions

Content moderation decisions by social media giants have also sparked an intense debate about free speech globally. The seemingly inconsistent decisions made by social media platforms have been used by authoritarian leaders to cloak their attempts to silence opponents—whether by blocking social media access or shutting down the internet altogether—in a thin veneer of legitimacy.

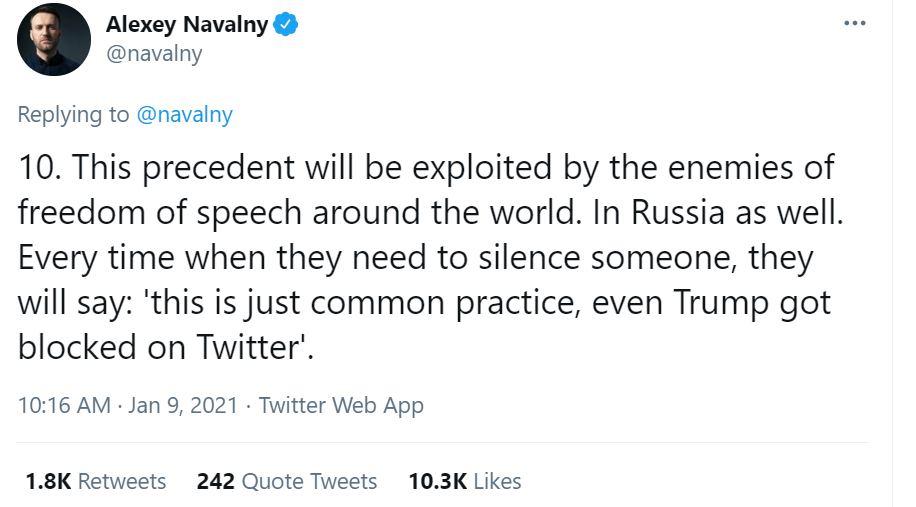

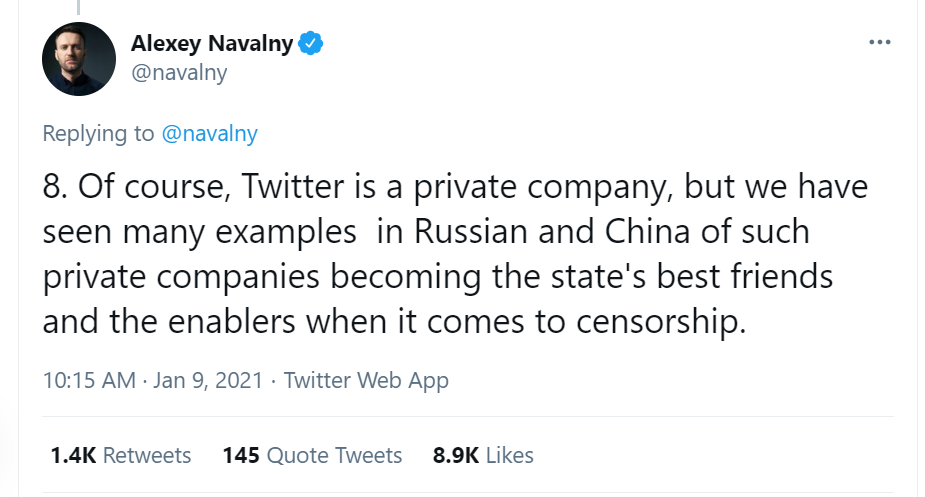

In response to Twitter’s ban of then-President Trump, jailed Russian opposition leader Alexei Navalny criticized the platform’s seemingly arbitrary decision and warned that it could potentially help authoritarians stifle dissent.

And it is clear that silencing online dissent is a priority for authoritarian leaders around the world. In the past, radio and television stations were often among the first sources of public information to be targeted during coups and revolutions. Today, it is the internet. Citing misinformation and fake news to claim an outsized gatekeeper role over the internet provides dictators a powerful political weapon. And there is certainly no shortage of dictatorial leaders willing to use internet shutdowns as a political cudgel. For instance, a 2019 report by the Collaboration on International ICT Policy in East and Southern Africa (CIPESA) showed that, since 2015, up to 22 African governments had ordered network disruptions.

The more recent actions of military leaders in Burma in the immediate aftermath of their coup are a case in point, demonstrating how important control over online spaces is to broader authoritarianism. The morning after the military takeover, citizens reported decreased internet connectivity, and days later access to Facebook (the primary means of internet access for many Burmese citizens), as well as other Facebook-owned services like WhatsApp and Instagram, was blocked. In justifying the ban, officials from the new regime claimed Facebook was being used to spread “fake news and misinformation . . . [that is] causing misunderstanding among people,” which could lead to further unrest.

The risk of giving authoritarian leaders an excuse to shut down the internet is not the only potential threat posed by the lack of transparency in content moderation decision-making processes. Over the last decade, social media companies have emerged at the center of a political firestorm in many countries. The danger that these private firms could come under undue influence from authoritarian regimes to stifle dissenting voices and opposition groups demonstrates an urgent need for an independent, fair, and transparent content moderation system.

Some of these platforms have already demonstrated their willingness to comply with authoritarian governments’ efforts to censor critics. Instagram recently caved to the Russian telecom regulator’s demands that it remove content related to opposition activist Alexei Navalny’s anti-corruption investigation. Facebook has also previously complied with arcane censorship laws and blocked anti-government content in several countries, including Vietnam, Morocco, and India.

The Path Forward—a Rights-respecting Content Moderation Regime

Currently, Twitter is piloting “Birdwatch,” a community-driven approach to addressing misleading information on its platform. Facebook has introduced an independent Oversight Board, which will review Facebook’s content moderation decisions and issue binding rulings on whether or not to uphold them.

Yet, social media companies will need to take more decisive action to improve public confidence in the wake of their sluggish attempts to halt the spread of misinformation and their acquiescence to censorship demands from authoritarian governments. To that end, they must set up regional rapid response units with a commitment to understanding the local political and social context to avoid decisions that play into the hands of authoritarian regimes.

These independent, localized units will also be important in addressing the overwhelming and varied forms of complaints received by big tech companies, ranging from appeals decisions to take-down requests from governments and users.

As big social media companies have taken on an increasingly central role in the public sphere, the intertwinement between private companies and international politics has become increasingly complicated. Laws enacted in the United States and Europe to regulate social media platforms may not apply elsewhere, particularly in the Global South. A content moderation regime that is not inclusive, has a slow response rate, and fails to factor in local, regional, and political contexts is ultimately failing to overcome the challenges it aims to address.

Access to information and freedom of expression, including the public conversation on social media, are a vital part of strong democratic processes. Social media companies have an enormous responsibility to respond to demands for greater accountability.

Gideon Sarpong is a 2020/21 Open Internet for Democracy Leader. He is a co-founder of iWatch Africa and a Policy Leader Fellow at the European University Institute, School of Trans-national Governance in Florence, Italy.